This is somewhat controversial. Some feel that a quality lens should not require further software correction. In fact, the lack of geometric distortion is a traditional sign of a high quality lens.

I think that this is mostly a non-issue. By allowing some aspects of the image to be adjusted in software, the lens designers can focus on issues which cannot be corrected in post-processing. This has the potential to make the lenses better, smaller, and possibly cheaper. Images from Panasonic Micro Four Thirds lenses are corrected for geometric distortion and some chromatic aberrations. Olympus Micro Four Thirds cameras also correct the geometric distortion. At this time, though, Olympus does not correct chromatic aberrations.

To illustrate the geometric distortion correction applied to various lenses, I photographed a tiled wall with each of them, and show the sensor output compared with the corrected JPEG output.

Here is an example pair from the Lumix G 20mm f/1.7 pancake lens:

uncorrected RAW output | JPEG image |

Note that this is in no way a criticism of using RAW images. There are many RAW image converters which will do the distortion correction automatically and seamlessly, and you will never notice that there was any geometric adjustment done at all. I am using the RAW images to visualize the initial image captured by the sensor, as it is the only way to access it.

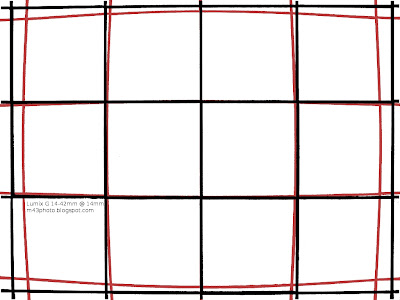

Here is a comparison of the uncorrected and corrected images for some lenses. Since I am only interested in the geometric distortion, I have increased the contrast so that the images become monochrome. I also superimposed the corrected out of camera images (black) onto the original uncorrected images (red).
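The overlay described above can be sketched in a few lines of Python. This is only an illustration, not the exact procedure used for the images here: the function names and the threshold value are hypothetical, the images are assumed to be plain nested lists of grey values (0-255), and both images are assumed to have been scaled to the same pixel dimensions first.

```python
# Illustrative sketch only; names and threshold are assumptions.
WHITE = (255, 255, 255)
RED = (255, 0, 0)
BLACK = (0, 0, 0)


def to_monochrome(gray, threshold=128):
    """Return True where a pixel is darker than the threshold (a tile line)."""
    return [[value < threshold for value in row] for row in gray]


def overlay(uncorrected, corrected, threshold=128):
    """Draw the uncorrected lines in red, with the corrected lines in black on top."""
    raw_mask = to_monochrome(uncorrected, threshold)
    jpeg_mask = to_monochrome(corrected, threshold)
    result = []
    for raw_row, jpeg_row in zip(raw_mask, jpeg_mask):
        row = []
        for raw_dark, jpeg_dark in zip(raw_row, jpeg_row):
            if jpeg_dark:
                row.append(BLACK)   # corrected image wins where both are dark
            elif raw_dark:
                row.append(RED)     # only the uncorrected image has a line here
            else:
                row.append(WHITE)   # background
        result.append(row)
    return result
```

Wherever red remains visible, the corrected image has moved the tile lines away from where the sensor recorded them.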

I have also included the adjustment needed for each lens. The percentages refer to the "Lens Distortion" filter in GIMP.

Lumix G 20mm f/1.7: -11%

Lumix G 14mm f/2.5: -16%

Lumix G 14-42mm f/3.5-5.6 @ 14mm: -18%

Lumix G 14-42mm f/3.5-5.6 @ 30mm: 0%

Lumix G 14-140mm f/4-5.8 @ 14mm: -17%

Lumix G 14-140mm f/4-5.8 @ 30mm: -4%

Lumix G 45-200mm f/4-5.6 @ 45mm: +1%
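To give a feel for what a radial adjustment like the ones listed above does, here is a minimal Python sketch of a generic one-coefficient radial distortion model. Note the assumptions: this is not the formula used by the GIMP filter or by the camera, the coefficient `k` is a hypothetical parameter that does not map directly onto the percentages above, and coordinates are normalised so that the image centre is (0, 0) and the corner lies at radius 1.

```python
def distort_point(x, y, k):
    """Map an ideal (rectilinear) point to where the lens actually puts it.

    k < 0 pulls points towards the centre (barrel distortion),
    k > 0 pushes them outwards (pincushion distortion).
    """
    r2 = x * x + y * y
    scale = 1.0 + k * r2
    return x * scale, y * scale


def undistort_point(xd, yd, k, iterations=20):
    """Invert distort_point by fixed-point iteration (fine for moderate k)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k * r2
        x, y = xd / scale, yd / scale
    return x, y


# A point in the corner of the ideal image, with mild barrel distortion.
xd, yd = distort_point(0.6, 0.8, -0.1)
print(xd, yd)                          # where the lens records the corner
print(undistort_point(xd, yd, -0.1))   # software correction moves it back
```

With a negative `k`, the centre of the frame is left almost untouched while the corners are pulled inwards, which is the barrel pattern seen at the wide end of the zoom lenses.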

Conclusion

Normal zoom lenses fairly consistently show barrel distortion at the wide end. The tele zoom Lumix G 45-200mm appears to have a very small amount of pincushion distortion.

Some lenses that do not feature any geometric distortion correction are the Lumix 8mm f/3.5 fisheye and Panasonic Leica Lumix DG Macro-Elmarit 45mm f/2.8 1:1 Macro lens.

What happens in video mode (GH2)? It would be interesting to be able to turn it on and off, depending on whether you want a little fisheye effect or not.

The geometric correction is also done in video mode. It is not possible to turn it off in the camera, in either still image or video mode.

The only way to see the actual effect is to develop the image from the RAW file with a program that lets you skip the geometric distortion correction. (I used UFRaw.)

If I understood correctly, when we shoot RAW (GH1, for example) no correction is applied... Is it possible to apply the correction in-camera? If not, we have to take extra care when shooting RAW, and the geometric correction must be applied in post...

What happens when these lenses are mounted on an Olympus m4/3 body?

If you shoot RAW images, then the correction is not done in the RAW file. However, the RAW file contains information about the geometric distortion correction needed. Many RAW converter programs will use this automatically, and let you work on the corrected image.

So for most purposes, this automatic distortion correction is no problem at all. You may only see it if you open the RAW file in some third party RAW converters.

Olympus cameras also correct for geometric distortion.

That's the reason why I'm not at all interested in many of these current M43 lenses, like this overhyped Lumix G HD 14-140mm:

Distortion at wide angle is really extreme (considering that it isn't a fisheye lens), and heavy software correction eats away resolution outside the center area of the frame. At the tele end, softer edges wouldn't matter that much, as the target is often small and in the center, but the wide angle end would be used for wider landscapes and such.

For comparison, the Four Thirds Panasonic Leica D 14-150mm has a lot better optical corrections (remember that hefty CA is also software corrected in this 14-140mm). While it is of course not free from distortion at wide angle, the level is a lot smaller, and correcting it, if needed, doesn't lose as much sharpness and FOV.

The Lumix G 8mm f/3.5 fisheye and Leica-Lumix 45mm f/2.8 macro have no software geometric distortion correction.

Based on your comment, it sounds like you might not be very interested in the fisheye lens. But it is actually a very sharp lens. The fisheye perspective makes it hard to use, though. There is also some CA, which is corrected by software.

From Snowflake

The proof of the pudding is in the tasting. If the final image looks bad then it fails the test.

I for one have not observed the loss of detail near the edge of any of the lenses when printed on 11x17 sized prints. Perhaps if I had a better printer I’d see the effects, but I believe it is the software in the camera that is making the difference. Those pixels that are “lost” are replaced using smart software.

There are real advantages to allowing a bit of freedom in image projection.

1. Cheaper lens

2. Lighter lens

3. Less chromatic effects

4. Increased detail captured.

The last improvement probably needs some clarification, since it is the opposite of the effect mentioned in the article.

A photon hitting the center of the sensor does so at a 90-degree angle. A photon hitting the edge of the sensor does so at an oblique angle. The number of photons per square mm near the edge of the sensor is less than the overall photons captured in the center of the sensor. Compressing the image near the edge of the sensor helps preserve sensitivity and maintain resolution, or photon density per square mm of sensor surface.

The best solution would be to make spherical sensors that flexed to the lens used, rather than trying to force images to be resolved on a flat plane. The problem is not the lens design, it is the sensor design.

Yes, I agree with you, as I also wrote in the article text. Allowing for post geometric distortion correction allows the lens designers to optimize other aspects of the lens.

And, coming from Pentax, my images are significantly sharper after switching to m4/3.

Advances in microlenses, and now also backside illumination, have helped to prevent shadowing of each pixel's actual light-gathering surface. And if anything, M4/3 is the mirrorless system least affected by oblique-angle photons, because its sensor is smaller than APS-C. Sony NEX also has an even smaller flange back distance.

And surely a good part of that 14-140mm's "10% smaller and lighter than company's previous equivalent lens" size (as the release announcement put it) comes from the fact that, without a mirror in the way, the smaller flange back distance allows a much less extreme retrofocal design. (The 4/3 flange back distance is very large compared to the sensor size, which makes its wide angles big.)

So before saying that heavy software correction of everything is needed for getting well balanced performance in reasonable size read this:

http://www.photozone.de/olympus--four-thirds-lens-tests/455-leica_14150_3856?start=1

Sure, for pocket PEN shooters, bigger compromises in optical correction allow smaller lenses that are more in balance with the body. But I'm after a body with really good ergonomics and proper direct controls, and high quality lenses, with software corrections used as a last touch instead of an all-around solution that crops the available FOV.

I have watched the complaints about using software to correct lens distortions, which are made as if an inferior product were being sold.

This is not true.

In fact, the product is in many ways superior. Given the same amount of engineering for a “distorted” lens and a “flat” lens, the “distorted” lens will have better CA, better resolution, and brighter edges, and will focus more sharply over greater distance ranges. Of course, software is necessary.

The most common complaint is that photons are being lost by cropping.

Are photons being lost by cropping?

Yes, but photons are also lost when one tries to force a flat image onto a flat plane. It really doesn’t make any difference whether the loss occurs in the lens or on the sensor. (This ignores the additional loss, mentioned earlier, from photons that hit the sensor obliquely and are therefore more likely to be lost in the gaps of the sensor or be reflected.)

Let's look at what the lens does. The natural or “purest” and most accurate image a lens could produce would resolve on a spherical surface, like the retina in the eye. Photon distribution is evenly spread out from all parts of the lens.

Now, when the image is forced to focus on a flat plane, a camera lens must shape this spherical cone to resolve on a flat surface. Optically, the edges of the light cone are “distorted” to focus at a greater distance than at the center of the sensor.

This process reduces the number of photons that hit the edges of the sensor, compared to the center of the sensor. This reduction occurs because the density of the photons hitting the surface varies with the inverse square of the distance at which the image is resolved. For example, if the edges of the sensor were twice as far away from the focal center of the lens as the center of the sensor, then the number of photons hitting the edges of the sensor would be reduced to a quarter per unit area of the sensor. These are “lost” photons.
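The inverse-square arithmetic in this example is easy to check with a short Python sketch. The function name is hypothetical, and the model deliberately covers only the distance effect described here, nothing else about a real lens.

```python
def relative_photon_density(edge_distance, centre_distance):
    """Photon density at the edge relative to the centre (inverse-square law).

    Both distances are measured from the focal centre of the lens,
    in any unit, as long as it is the same unit for both.
    """
    return (centre_distance / edge_distance) ** 2


# Edge twice as far from the focal centre as the sensor centre.
print(relative_photon_density(2.0, 1.0))
```

With the edge twice as far away, the density per unit area falls to one quarter of the value at the center, so three quarters of the photons are "lost" relative to the center.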

Eventually some camera manufacturer is going to take the brave step and make a spherical sensor.

Does Lightroom (3.3) automatically correct the 20mm f/1.7? I've not been able to figure it out yet. Thanks, and LOVE the blog.

I haven't used the program Lightroom, but I believe all serious, commercial programs do this geometric distortion correction.

Ball Lightning: Lightroom automatically corrects every Micro Four Thirds lens. The lenses have the correction parameters built-in, these are saved to the RAW file, and Lightroom gets the parameters from the RAW file.

So in fact, today's Lightroom is perfectly capable of correcting Micro Four Thirds lenses that haven't been released yet. For example, I recently bought the just-released Olympus 12mm f/2.0 lens and Lightroom handled it perfectly, without any need for software upgrades or similar. Heck, I didn't even think of that until now. It's all just seamless.

Do you know if it is possible on the GH2 (as on the Canon 5D II or the Nikons) to "record" new lenses with the correction ratio that we want, or to use a system like "lensalign pro" (http://www.imaging-resource.com/ACCS/LA/LA.HTM)?

???

thanks

jssteinberger@yahoo.com

I'm not familiar with this. I don't think it is needed, since the lenses are already corrected for when using out of camera JPEG images. And if you use the supplied RAW image processing program, you also get this correction automatically.

The downfall of Lumix geometric distortion correction is the sharpening filter used to compensate for the softening effect of the correction filter. This artificially enhances the aliasing artifacts produced by the image sensor, making image edge details excessively sharp. You can actually see the aliasing artifacts shimmer in the LCD as the lens locks into focus. While it's a manageable drawback for still photography, the over-sharpened edge details force the video encoder to devote an excessive amount of bitrate to encode them, leaving less bitrate available to encode lower-contrast details.
